Entropy and Utility Value

- Jul 23, 2022

- 9 min read

Updated: Jan 12, 2024

Maximizing the usefulness of energy to get what we want

Often, entropy is colloquially misunderstood as mere chaos. However, this concept holds a different, more nuanced meaning in thermodynamics. Entropy essentially describes the dispersion of energy within a system and indicates the unavailability of this energy for doing work.

Fair warning: I use the word entropy 75 times in this article and sound like a broken record. That's because it's important.

Entropy and Energy Quality

There's an inverse relationship between entropy and the quality of energy. A system with high-quality energy exhibits low entropy, signifying a greater potential for performing work. This concept is crucial to understanding thermal energy in heat engines, whose maximum possible efficiency is set by the Carnot cycle, an idealized cycle that no real engine can beat. That limit applies to power plants and car engines alike. The efficiency of a heat engine depends on the temperature difference between the hot source and the cold sink. With a constant cold sink, a hotter source translates to higher efficiency because its thermal energy is of higher quality and carries less entropy per unit of energy.
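To put rough numbers on how source temperature affects efficiency, here is a minimal sketch using the standard Carnot limit, 1 − T_cold / T_hot, with temperatures in kelvin. The 300 K cold sink and the list of source temperatures are my own illustrative assumptions, and real engines fall well short of this ideal bound.

```python
def carnot_efficiency(t_hot_k: float, t_cold_k: float) -> float:
    """Ideal (Carnot) upper bound on heat-engine efficiency.

    Both temperatures must be absolute temperatures in kelvin.
    """
    if t_cold_k <= 0 or t_hot_k <= t_cold_k:
        raise ValueError("need t_hot_k > t_cold_k > 0 (kelvin)")
    return 1.0 - t_cold_k / t_hot_k


T_COLD_K = 300.0  # assumed ambient cold sink, roughly 27 °C

# Illustrative hot-source temperatures (assumptions, not data from a real plant)
for t_hot_k in (400.0, 600.0, 900.0, 1500.0):
    limit = carnot_efficiency(t_hot_k, T_COLD_K)
    print(f"{t_hot_k:6.0f} K source -> ideal efficiency limit {limit:.0%}")
```

With the cold sink held fixed, each step up in source temperature raises the ceiling on efficiency, which is the sense in which hotter thermal energy is higher quality.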

Fossil Fuels and Entropy in Heat Engines

In most heat engines, we burn fossil fuels to create extremely hot sources, using the ambient air as the cold sink. The capacity to increase the temperature of these hot sources is limited by the material properties of the engines. Thus, in burning fossil fuels, we convert high-temperature, low-entropy thermal energy into other forms. While energy conservation is guaranteed by the First Law of Thermodynamics, this conversion comes with a universal cost: an increase in entropy.

The Second Law and Electricity Production

The Second Law of Thermodynamics states that any spontaneous process results in an increase in the entropy of the universe overall.

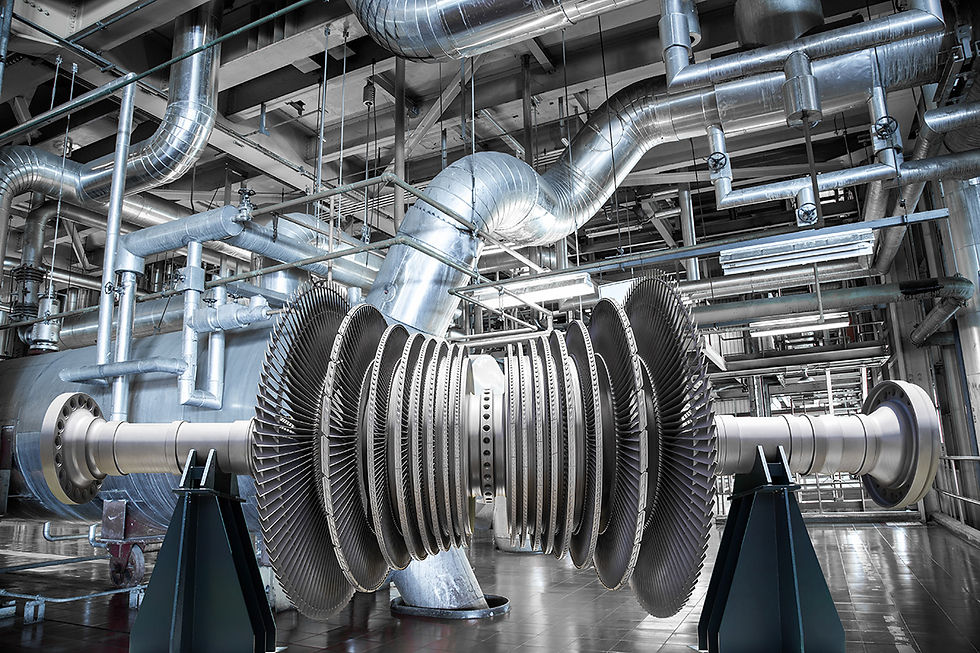

Let's take an example: producing electricity. We start with a lump of coal. Coal is basically a reservoir of chemical energy. When we combine coal with heat and oxygen, a chemical reaction called combustion takes place that produces a lot of heat. We use that heat to boil water and produce steam. We have converted chemical energy in the coal to thermal energy in the steam. The result is high-temperature steam: high-quality thermal energy that carries little entropy relative to the energy it holds.

But here's the catch. Getting there generated even more entropy elsewhere: the coal's concentrated chemical energy has become dispersed combustion gases and waste heat, resulting in a net increase in the entropy of the universe. Now we take that high-temperature, low-entropy steam and run it through a turbine that spins a generator to produce electricity. We have now converted thermal energy into electrical energy. The electrical energy is very low entropy, but we have created even more entropy overall, since the steam has cooled toward the temperature of its surroundings. Besides that, friction in the turbine's bearings and eddy currents in the generator both end up as heat. Crucially, this is low-quality, high-entropy heat from which little to no usable energy is available to do work.

In our electricity generation process, we have lowered the entropy of some parts of the system but at the expense of increasing the entropy of other parts of the system by a greater amount. No process in the universe can occur such that overall entropy decreases. Local abatements in entropy are always accompanied by even greater increases in entropy elsewhere.
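The bookkeeping behind "a local decrease paid for by a larger increase elsewhere" can be sketched for an idealized heat engine running between two large reservoirs. This is a simplified model, not a description of a real plant: the 1000 J of heat input, the 800 K source, the 300 K sink, and the 40% efficiency are all assumed numbers, and each reservoir's entropy change is approximated as heat divided by its (constant) temperature.

```python
def engine_entropy_changes(q_hot_j, t_hot_k, t_cold_k, efficiency):
    """Entropy bookkeeping for a heat engine between two reservoirs.

    Returns (work_j, ds_hot, ds_cold, ds_net), entropies in J/K.
    """
    work_j = efficiency * q_hot_j      # useful work extracted
    q_cold_j = q_hot_j - work_j        # First Law: the rest is rejected as heat
    ds_hot = -q_hot_j / t_hot_k        # hot source loses entropy
    ds_cold = q_cold_j / t_cold_k      # cold sink gains entropy
    return work_j, ds_hot, ds_cold, ds_hot + ds_cold


# Assumed illustrative values: 1000 J drawn from an 800 K source,
# waste heat rejected to a 300 K sink, 40% conversion efficiency.
work_j, ds_hot, ds_cold, ds_net = engine_entropy_changes(1000.0, 800.0, 300.0, 0.40)
print(f"work out:        {work_j:.0f} J")
print(f"hot source:      {ds_hot:+.2f} J/K")
print(f"cold sink:       {ds_cold:+.2f} J/K")
print(f"net (universe):  {ds_net:+.2f} J/K")
```

Under these assumptions the hot source's entropy goes down, the cold sink's goes up by more, and the net change is positive, as the Second Law requires.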

This idea is critical to civilization, and one whose far-reaching implications I feel are significantly underappreciated.

Entropy in Different Systems

If two things are at the same temperature, they are said to be in thermal equilibrium. This is the point of maximum entropy, and thermal energy is not available to do useful work between them. But entropy is not only about temperature; it applies to other forms of energy too. A weight raised above the ground has lower entropy than a weight on the ground, since when that weight is lowered to the ground, its gravitational potential energy is available for conversion into work. A pressurized air tank has low entropy, whereas when that tank is empty (that is, at the same pressure as the surroundings), the entropy of that system is at its maximum. Entropy degrades the usefulness of energy.

Our Primary Source of Low Entropy Energy

Left to itself for long enough, Earth would eventually reach a state of maximum entropy, with everything at a uniform temperature, composition, height, pressure, and so on. However, we are fortunate to have a continuous influx of low-entropy energy in the form of electromagnetic radiation from a massive nuclear fusion reactor approximately 93 million miles away: the Sun.

This low-entropy energy from the Sun is vital as it sustains life on Earth. Yet, with every transformation of this energy, there's an increase in entropy and a corresponding decrease in energy quality.

Entropy Creation in Daily Processes

For human use, the most advantageous forms of energy are those with the lowest entropy, since more things can be done with them. However, generating such low-entropy energy sources typically results in significant increases in entropy elsewhere.

Consider driving a car fueled by gasoline. As you accelerate to 70 mph, the entropy of both you and the car decreases as your velocity and kinetic energy increase and diverge from that of your surroundings. Yet, this process simultaneously produces a substantial amount of entropy, primarily as heat. This heat shows up in several places: the combustion byproducts exiting the exhaust, heat dissipation through the radiator, friction within the drivetrain, and even air drag around the car. When you apply the brakes to slow down, the kinetic energy of the moving car is transformed into high-entropy heat within the brake pads and rotors. Although the energy itself doesn't vanish, its increased entropy renders it non-recoverable for practical work.
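For a sense of scale on the braking example, here is a back-of-the-envelope sketch. The 1500 kg car mass and 293 K ambient temperature are assumptions of mine, and the entropy figure uses the simple estimate "heat divided by ambient temperature", which only applies once the brake heat has fully dispersed into the surrounding air.

```python
MPH_TO_M_PER_S = 0.44704  # exact definition of the mile-per-hour in SI units


def kinetic_energy_j(mass_kg: float, speed_mph: float) -> float:
    """Kinetic energy (joules) of a mass moving at a speed given in mph."""
    speed_m_per_s = speed_mph * MPH_TO_M_PER_S
    return 0.5 * mass_kg * speed_m_per_s ** 2


CAR_MASS_KG = 1500.0  # assumed mass of car plus driver
AMBIENT_K = 293.0     # assumed ambient temperature, about 20 °C

# All kinetic energy at 70 mph becomes heat during a full stop.
brake_heat_j = kinetic_energy_j(CAR_MASS_KG, 70.0)

# Rough entropy generated once that heat has spread into the ambient air.
entropy_j_per_k = brake_heat_j / AMBIENT_K

print(f"heat dumped into the brakes: {brake_heat_j / 1000:.0f} kJ")
print(f"entropy generated (approx.): {entropy_j_per_k:.0f} J/K")
```

The energy is all still there after the stop, but it is now spread through the air at ambient temperature, where essentially none of it can be turned back into motion.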

Another example is the operation of a freezer. Inside a freezer, a refrigeration system transfers heat from the interior to the exterior. When the internal temperature falls below 32°F, water freezes into ice. This process, driven by a compressor that is powered by electricity (a form of low-entropy energy), results in more heat outside the freezer than what was initially removed from within it. This is because the electricity itself is converted into heat, transitioning from low-entropy electrical energy to high-entropy thermal energy. Thus, even though we produce low-entropy ice, the overall entropy increases in the process.
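The freezer's energy and entropy balance can be sketched the same way. This is an idealized model with made-up numbers: 1000 J of heat removed from a 255 K interior, 500 J of electrical work, and a 295 K kitchen. The only physics used is the First Law (heat rejected equals heat removed plus work) and the approximation that each side's entropy change is heat divided by its temperature.

```python
def freezer_entropy_changes(q_removed_j, work_j, t_inside_k, t_outside_k):
    """Energy and entropy bookkeeping for an idealized freezer.

    Returns (q_rejected_j, ds_inside, ds_outside, ds_net), entropies in J/K.
    """
    q_rejected_j = q_removed_j + work_j       # First Law: work ends up as heat too
    ds_inside = -q_removed_j / t_inside_k     # interior loses entropy
    ds_outside = q_rejected_j / t_outside_k   # room gains entropy
    return q_rejected_j, ds_inside, ds_outside, ds_inside + ds_outside


# Assumed illustrative values (not measurements of a real appliance)
q_rejected_j, ds_inside, ds_outside, ds_net = freezer_entropy_changes(
    q_removed_j=1000.0, work_j=500.0, t_inside_k=255.0, t_outside_k=295.0
)
print(f"heat rejected to the room: {q_rejected_j:.0f} J")
print(f"inside the freezer:        {ds_inside:+.2f} J/K")
print(f"outside the freezer:       {ds_outside:+.2f} J/K")
print(f"net (universe):            {ds_net:+.2f} J/K")
```

More heat comes out the back than was pulled from the inside, and the entropy gained by the room exceeds the entropy lost by the ice.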

Some Questions

This brings up a few questions:

Is all energy use the same?

Does it always produce the same amount of entropy?

Is using 1000 joules (a unit of energy) to heat our house the same as using 1000 joules to drive to the store? What about using 1000 joules to make a cold drink?

Are there different ways of using that 1000 joules for the same end goal that generate different amounts of entropy?

Let's say we want a cold drink. We put some water inside an ice tray that goes into the freezer to make ice cubes. The longer the freezer runs, the more electricity it will use and the more entropy it will generate. After the freezer runs for a while, it will move enough heat out of the water to freeze it. It has to move the heat from the water plus the heat from the air inside the freezer to the outside of the freezer. But it doesn't just have to remove the heat that started inside the freezer. Once heat starts to be removed from inside the freezer, a temperature difference is created between the inside and outside. That means thermal energy will begin to flow into the freezer through its walls at a rate that is proportional to the temperature difference and the surface area, and inversely proportional to the resistance to heat flow (R-value) of the freezer walls. For the water in the ice tray to ever have a chance of freezing, the freezer must remove heat faster than it flows in through the walls. The more heat flows into the freezer, the more electricity is required to remove it, and the more entropy is generated.

Now, to generate less entropy in creating this ice, we need to use less electricity. We need to limit the amount of heat that flows into the freezer. We could use a smaller freezer (reducing surface area), or we could increase the resistance to heat flow of the walls of the freezer by insulating it better. Doing either of these things will allow us to get the same end result we desire, ice for our drink, but generate less entropy in the process.

Same utility value, less entropy.
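Here is a rough sketch of the two options for cutting heat leakage, assuming simple steady-state conduction where the leak rate equals area × temperature difference ÷ R-value (SI R-value, in m²·K/W). The baseline freezer dimensions, the 40 K temperature difference, and the R-values are illustrative assumptions.

```python
def wall_heat_leak_w(area_m2: float, delta_t_k: float, r_value_si: float) -> float:
    """Steady-state heat flow (watts) through walls of a given SI R-value.

    Flow rises with area and temperature difference, and falls as R rises.
    """
    if r_value_si <= 0:
        raise ValueError("R-value must be positive")
    return area_m2 * delta_t_k / r_value_si


DELTA_T_K = 40.0  # assumed inside/outside temperature difference

# Hypothetical freezers: (label, wall area in m^2, SI R-value in m^2*K/W)
scenarios = [
    ("baseline freezer", 4.0, 2.0),
    ("smaller freezer", 2.0, 2.0),
    ("better insulation", 4.0, 4.0),
]

for label, area_m2, r_value_si in scenarios:
    leak_w = wall_heat_leak_w(area_m2, DELTA_T_K, r_value_si)
    print(f"{label:18s}: {leak_w:5.1f} W leaking in")
```

In this simplified model, halving the surface area or doubling the R-value each halves the heat the compressor has to keep pumping back out.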

Remember, entropy describes the unavailability of energy to do work. If we generate less entropy in the process of making ice for a cold drink, we are left with more electricity to do other useful work we also desire.

Fossil Fuels: High-Quality Energy

Modern humans have been gifted with pockets of very, very low-entropy energy on Earth. We call these fossil fuels and burn them to convert the energy into other forms to improve our lives. In the process, we generate lots of entropy. These low-entropy fossil fuels take millions of years to form, so on human timescales we can consider them finite.

Much like the freezer example, not all fossil fuel use is the same in terms of the utility value to humans and the entropy it produces in its conversion to other forms.

3 Ways of Using Natural Gas

Let's take natural gas, for example. If we burn a cubic foot of natural gas outside in the open, we convert it to heat, dissipating into the surrounding air. We have not extracted anything useful, so its utility value is zero.

Next, we take that cubic foot of natural gas and burn it inside the combustion chamber of a power plant to spin a generator to make electricity. About 40% of the energy in the gas ends up as electricity we can use, and the remaining 60% is dissipated to the surroundings as heat.

That electricity then goes to our homes for us to use. Let's say we use this energy to power our television to watch a documentary on CuriosityStream. That electrical energy powers the electronics in the television to produce light and sound in a way that we value. Some of that energy is converted to heat in the circuitry in the television, and even the light energy and sound energy are converted into small amounts of heat when they hit things in the room. Of course, you can't feel this heat because the thermal energy is so dispersed; its entropy is so high that the temperature rise in any one location is imperceptible. That thermal energy can never be recovered to do useful work.

In this case of burning natural gas in a power plant to produce electricity to power our television, we have extracted a human utility value from that gas of about 40% before all the energy is eventually converted to high-entropy dispersed heat.

Here's the interesting part. In both examples, burning natural gas in the open and burning it in a power plant, we generated the same amount of entropy. The energy in the natural gas eventually spread out into high-entropy thermal energy and has become incapable of doing work. In one case, we got no utility value; in the other, we got about 40% utility value – for the same cost in entropy.

Finally, let's take it a step further. Now we burn that cubic foot of natural gas in a power plant, make electricity, and watch CuriosityStream again, but with a twist. It's winter. It's cold. You want to be warm. You can plug in an electric heater and convert some electrical energy to thermal energy, but that would take away some of the electricity you were planning on watching CuriosityStream with. What if instead of dispersing the heat to the surroundings at the power plant, we pumped that heat to your house through a heat network? If half of that heat makes it to your home, representing 30% of the total energy in that cubic foot of natural gas, an additional 30% of the energy in the original fuel contributes to your utility value. We've generated the same amount of entropy as in the first two examples, but now our utility value is around 70%. In other words, we can make use of 70% of the energy in the fuel in order to get things we want.
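The arithmetic for the three natural gas scenarios can be laid out in a few lines. The 40% electrical fraction and the "half of the waste heat reaches the home" figure come straight from the examples above; the function and constant names are just ones I made up for this sketch.

```python
ELECTRIC_FRACTION = 0.40        # share of fuel energy that becomes electricity
HEAT_RECOVERED_FRACTION = 0.50  # share of waste heat that reaches the home


def utility_fraction(make_electricity: bool, recover_heat: bool) -> float:
    """Fraction of the fuel's energy that ends up doing something we value."""
    if not make_electricity:
        return 0.0  # burned in the open: nothing useful extracted
    waste_heat = 1.0 - ELECTRIC_FRACTION
    recovered = HEAT_RECOVERED_FRACTION * waste_heat if recover_heat else 0.0
    return ELECTRIC_FRACTION + recovered


scenarios = [
    ("burned in the open", False, False),
    ("power plant only", True, False),
    ("power plant + district heating", True, True),
]

for label, make_electricity, recover_heat in scenarios:
    share = utility_fraction(make_electricity, recover_heat)
    print(f"{label:32s}: {share:.0%} utility value")
```

All three scenarios end with the same fuel energy dispersed as high-entropy heat; only the amount of useful service extracted along the way differs.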

Couldn't we just send that 30% extra energy we used as heat in the third example to the house in the form of electricity? Sadly, no.

Different energy conversions that humans want require different energy qualities. Generating electricity efficiently in a typical power plant requires high energy quality, whereas heating your home does not.

The thermal energy left over after operating the heat engine in the power plant is low-quality and no longer able to produce electricity. That energy is either dispersed into the environment or can be used for heating homes. The latter case is called cogeneration with district heating.

An Extrapolative Aside:

If every conversion of energy involves an increase in entropy overall, then eventually, won't the entropy of the universe keep going up and reach a maximum? Yes. This is called the "heat death" of the universe – when entropy is at maximum, and there is no more energy that can bring about physical changes – anywhere – like, ever.

Conclusion

Perhaps one of humanity's key goals should be to maximize the ratio Utility Value ÷ Entropy. In so doing, we can improve the lives of humans and other species and do the least damage to the environment we live in as we pursue the things we want.

Questions for you:

In your daily life at home or work, where can you get the same utility value while generating less entropy?

How can we apply this thinking to have a meaningful impact on the world?

Why do you think the vast majority of people don't talk about entropy when they talk about humanity's energy use?

P.S. Want a refresher on the Basics of Thermodynamics?

P.P.S. There's another related thermodynamic quantity that's crucial to economics and humanity's energy landscape, which we will cover in a future article.
